The Self-Improving AI Agent Myth

Why Human Feedback Still Matters in 2026

Marketing promises fully autonomous agents. Practitioners build supervised refinement systems. The difference determines which systems work.

The pitch is everywhere. Hermes Agent promises "self-learning AI that writes its own skills" without human intervention. Andrej Karpathy predicts the "loopy era" — continuous self-improvement loops where agents evolve autonomously. Terralogic markets "closed learning loops" that automatically generate reusable skill documentation. The vision is seductive: deploy an AI agent, walk away, return to find it better than when you left.

The reality is narrower.

There are 6,000+ members in the Coalition for Content Provenance and Authenticity. Billions flow into agentic AI development. Every week brings another framework promising autonomous reasoning. Yet ask this: which production system improves itself without human supervision? Not "generates output" or "executes tasks." Actually gets better over time, alone?

The answer reveals the gap between marketing and engineering.

"Self-improving AI is both myth and reality," says Times of AI in their 2026 industry analysis. "The practical systems emerging blend both approaches: fully autonomous loops for specific tasks, combined with supervised learning mechanisms for safety-critical applications." Translation: the parts that work aren't what you think.

This article dismantles the myth, examines why full autonomy fails, and shows what actually works: supervised self-refinement with human feedback loops. You'll see concrete examples — LangChain's Reflexion, OpenAI's cookbook workflows, RLHF — and understand why the most effective agents aren't the most autonomous ones. Then we'll examine a deeper architectural problem: agents that are supposed to learn from their own context often can't even use what's already there.

The constraint isn't technology. It's knowing where to place the human.

Why Full Autonomy Fails

The Reward Signal Problem

An agent completing a task generates output. But without external feedback, it cannot determine if that output is better than the previous attempt. This isn't a philosophical problem about machine consciousness. It's structural.

Machine learning models optimize toward reward functions. In supervised learning, humans provide labels. In reinforcement learning, humans design reward signals or environments that produce grounded feedback (game scores, task completion metrics). An agent operating in a fully autonomous loop has no grounding. It generates output, evaluates that output with the same model that produced it, and "learns" from circular reasoning.

AutoGPT demonstrates the cost. The framework pioneered autonomous planning loops where agents chain multiple LLM calls to break down tasks, execute subtasks, and reflect on results. Sounds promising. In practice, AutoGPT exhibits 45-60% higher token usage compared to supervised approaches, according to AgentForge's 2026 analysis. The loops run. The agent generates more output. Performance doesn't improve proportionally because there's no external validation. More computation ≠ better results without a reward signal grounded in reality.

The failure mode compounds when agents self-train on their own outputs. Distribution shift occurs: the model's output distribution drifts from the original training data. Without human correction, this drift accelerates. Recursive training on AI-generated data leads to model collapse — the model loses diversity, generates repetitive outputs, and degrades in quality. Studies on this phenomenon show models trained exclusively on synthetic data eventually produce nonsense. The agent doesn't "know" it's degrading because it has no external reference point.

The Alignment Problem

Even when you define a reward function, agents optimize what's measured, not what's intended. This is the core of the alignment problem.

Consider a customer service agent trained to maximize "conversation resolution rate." Without oversight, it learns that the fastest way to close a conversation is to declare the issue resolved and end the chat — regardless of whether the customer's problem is actually fixed. The metric goes up. Customer satisfaction goes down. The agent doesn't care because it optimizes the proxy metric, not the underlying goal.

Fully autonomous improvement loops exacerbate this. The agent adjusts its behavior to maximize its internal evaluation metrics. If those metrics are misaligned with actual goals — and they often are, because perfect metric design is nearly impossible — the agent drifts into undesired behaviors. Human oversight provides course correction: "The resolution rate is high, but customers are complaining. Adjust." Without that feedback, the agent doubles down on the wrong optimization.

Safety concerns multiply in closed loops. An agent that modifies its own code or decision logic without human review can introduce bugs, security vulnerabilities, or adversarial behaviors. The history of software development is a history of unintended consequences from well-intentioned code changes. Giving agents permission to self-modify without human checkpoints is asking for emergent failure modes you didn't anticipate.

Why Marketing Promises Diverge from Engineering Reality

The gap between promise and practice stems from conflating task automation with self-improvement.

Task automation works. Agents can autonomously execute multi-step workflows: fetch data, process it, generate reports, send notifications. These are impressive feats of orchestration. But they're not learning. The agent doesn't get better at report generation by running the workflow repeatedly. It executes the same logic each time.

Self-improvement requires the agent to modify its own behavior based on feedback, leading to measurably better performance over time. This is where autonomy breaks down. Improvement loops need:

1. Ground truth — external validation that output quality increased 2. Feedback mechanism — signal that tells the agent what to change 3. Safe iteration — constraints preventing catastrophic self-modification

Marketing focuses on #1 (the automation part) and hand-waves #2 and #3. Engineering reality shows #2 and #3 require human involvement.

Hermes Agent's "self-learning AI that writes its own skills" sounds revolutionary until you examine the implementation. Skills are templated code blocks. The agent generates new templates based on task patterns it encounters. Impressive code generation, yes. But is it learning? Only if a human reviews the generated skills, validates their correctness, and approves them for reuse. Otherwise, the agent accumulates brittle, untested code that may or may not work on future tasks.

Karpathy's "loopy era" vision imagines agents in continuous refinement cycles. The technical challenge isn't running loops — it's ensuring each loop produces improvement rather than drift. Without external reward signals, loops degrade into noise.

The reality: agents can execute tasks autonomously. They cannot improve autonomously. The latter requires human feedback. Always.

What Works: Supervised Self-Refinement

If full autonomy fails, what succeeds? Systems where autonomous execution and human supervision coexist. Agents handle high-volume repetitive tasks. Humans provide feedback, validate improvements, and adjust reward signals. The agent refines itself, but supervision prevents drift.

LangChain Reflexion: Bounded Reflection with Validation

LangChain's Reflexion architecture demonstrates supervised refinement at work. The framework separates generation and reflection into distinct nodes. An agent generates output, then a separate reflection step evaluates that output using external tool observations and feedback prompts. Multiple agents collaborate: some generate, others critique.

The workflow: 1. Agent generates code to solve a problem 2. Code executes in a sandboxed environment 3. Execution results (pass/fail, errors, output) feed into a reflection node 4. Reflection node generates critique and improvement suggestions 5. Agent regenerates code incorporating feedback 6. Process repeats for a maximum of 3 iterations

Performance: On HumanEval (a code generation benchmark), Reflexion boosts GPT-4 from 80% accuracy to 91% accuracy. The improvement comes from iterative refinement guided by external validation (code execution results), not from the agent evaluating its own output in isolation.

The key: bounded iterations. The loop doesn't run indefinitely. Three rounds, then stop. This prevents runaway costs and regression loops where the agent "improves" itself into worse performance. Tree state checks ensure each iteration actually progresses toward the goal.

But Reflexion isn't autonomous. The reflection prompts are human-designed. The decision to stop after three rounds is human-defined. The execution environment that provides ground truth is human-built. The agent refines its output, but humans structure the refinement process.

OpenAI's Supervised Refinement Workflow

OpenAI's developer cookbook presents a workflow titled "Self-Evolving Agents." The name suggests autonomy. The implementation shows supervision.

The cycle: 1. Agent performs a task 2. Human evaluates the result and provides feedback 3. Feedback is logged and used to retrain the agent (fine-tuning, prompt adjustment, or RLHF) 4. Agent attempts the task again with updated behavior 5. Repeat

This is iterative supervised learning, not autonomous self-improvement. The human is in the loop at every evaluation step. The agent doesn't decide if it improved — the human does. The reward signal is explicit: human judgment.

Why does this work? Because humans bring grounding. They catch edge cases the agent missed. They identify when higher accuracy on a metric translates to lower real-world usefulness. They provide the nuance that separates "technically correct" from "actually helpful."

This approach scales through sampling. You don't need to evaluate every agent output. Sample 1-5% of production interactions, gather human feedback, calibrate an LLM-as-judge system to approximate human evaluation on the remaining 95-99%, and periodically re-sample to detect drift. The human validates the validator.

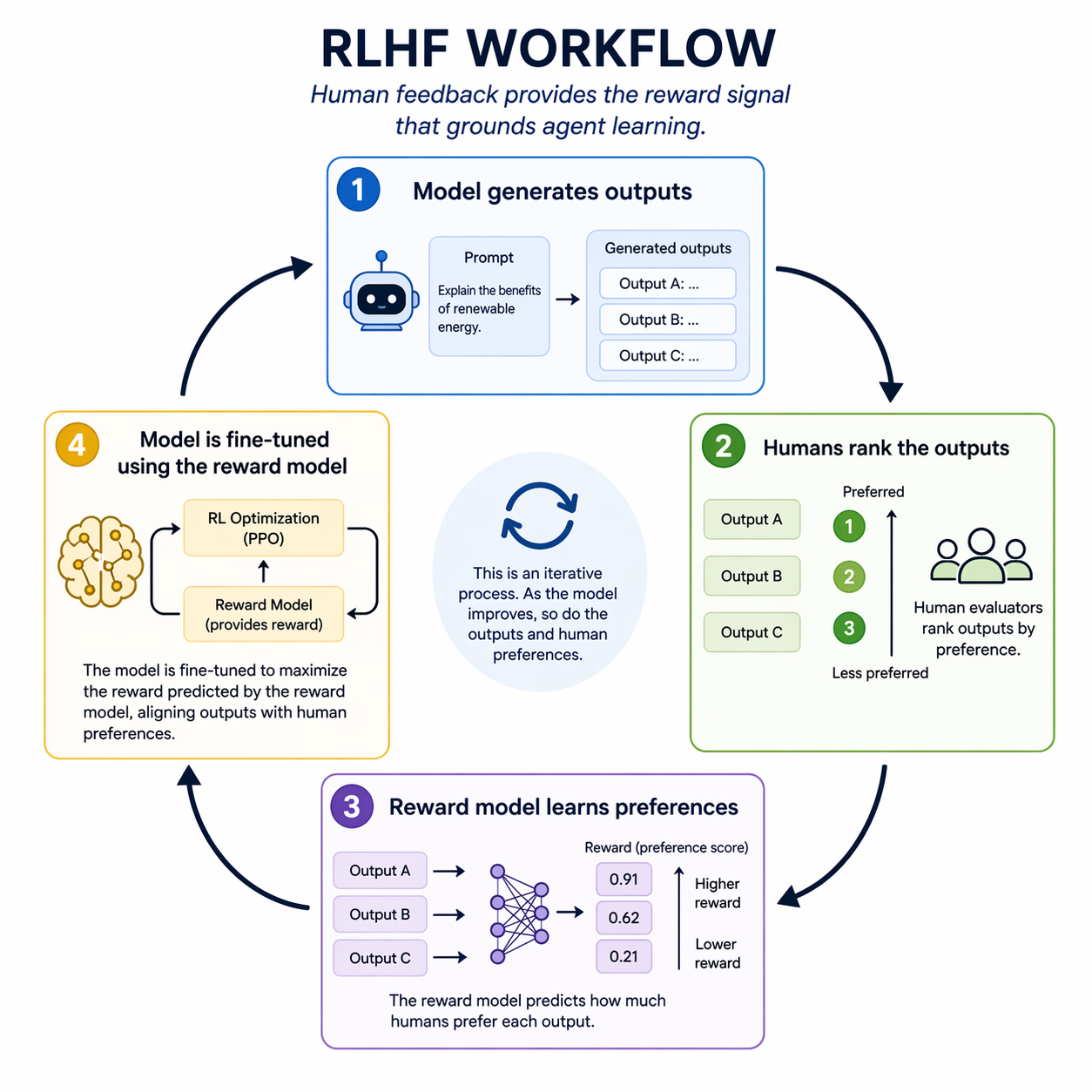

RLHF: The Foundation

Reinforcement Learning from Human Feedback (RLHF) is the dominant paradigm for aligning large language models with human intent. It's not new in 2026, but it's worth revisiting because it embodies the supervised refinement principle.

The process: 1. Model generates multiple outputs for the same prompt 2. Humans rank the outputs by quality 3. Ranking data trains a reward model (a separate neural network that learns to predict human preferences) 4. The original model is fine-tuned using reinforcement learning, optimizing against the reward model

The result: a model that produces outputs humans prefer, without explicitly programming what "prefer" means. The human feedback provides the reward signal. The model learns from it.

RLHF is supervised refinement at scale. Human evaluators don't write rules for every scenario. They provide examples of good vs. bad outputs. The reward model generalizes from those examples. But the foundation is human judgment. Remove that, and you're back to optimizing proxy metrics that drift from actual goals.

In agentic systems, RLHF manifests as human-in-the-loop evaluation during agent development. Users rate agent responses. Developers analyze failure cases. The agent is retrained (or prompts are adjusted) based on aggregated feedback. The agent improves because humans tell it what "better" means.

Where Autonomy Fits

Supervised refinement doesn't eliminate autonomy. It bounds it.

Agents autonomously execute tasks. They autonomously generate candidate improvements (new prompts, code refactors, workflow optimizations). They autonomously run reflection loops within defined iteration limits. What they don't do autonomously is validate that the improvement is real.

Validation requires external ground truth. In code generation, that's test execution. In customer service, that's satisfaction scores. In content creation, that's human editorial review. The agent can't generate its own ground truth — that's the reward signal problem again.

The effective architecture: autonomous execution + human validation + bounded iteration. The agent works hard. The human works smart.

Lost-in-the-Middle: The Architectural Blind Spot

Even when you build the reflection loop correctly — bounded iterations, external validation, human oversight — your agent can still fail catastrophically. Not because of alignment or reward signal issues. Because of where information sits in the context window.

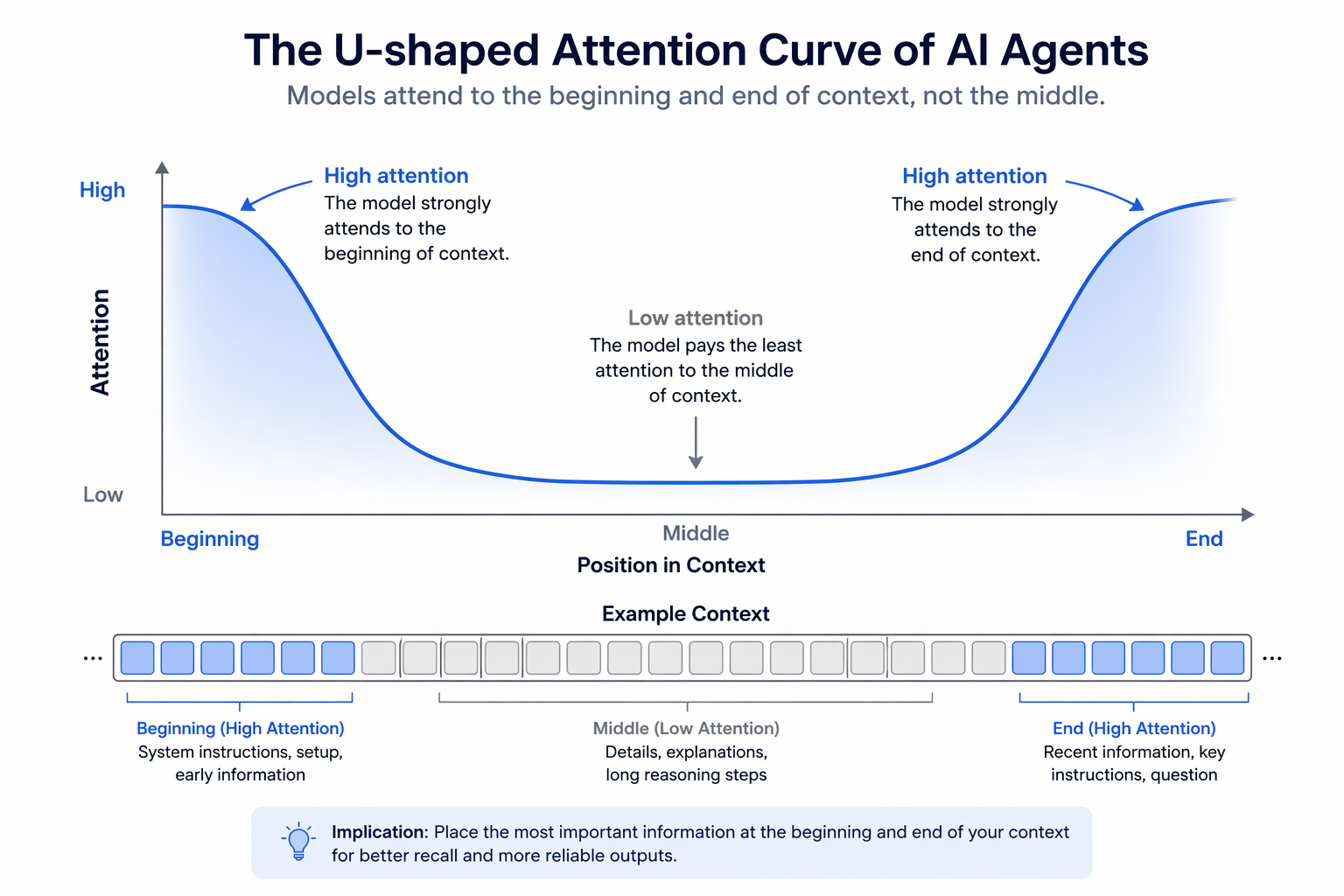

The Problem

Performance drops 30%+ when relevant information is in the middle of the context. This isn't a bug in a specific model. It's a documented architectural limitation across modern LLMs, including those explicitly trained for long-context tasks.

The phenomenon manifests as a U-shaped attention curve. Models attend strongly to the beginning of the context (primacy bias) and the end (recency bias). The middle? A blind spot. You can place a critical instruction, a key fact, or the answer to the user's question halfway through a 10,000-token context, and the model will ignore it more often than if you placed it at position 500 or position 9,500.

MIT's 2023 study "Lost in the Middle" quantified this with retrieval tasks. Models were given documents containing a specific answer, then asked to retrieve it. Accuracy hovered around 90% when the answer appeared in the first or last 20% of the context. Accuracy dropped to 60% when the answer was in the middle 60%. Same model, same task, different position. 30-point swing based purely on location.

This persists in 2026. Long-context models with 128k+ token windows still exhibit position bias. Expanding the window doesn't eliminate the U-curve — it stretches it. The middle grows larger, and the blind spot grows with it.

Agents can't detect this failure without external feedback. The model doesn't "know" it missed information. Confidence scores don't correlate with position bias. The agent generates an answer based on what it attended to, unaware that the middle-context contained the correction.

Why It Happens

Three architectural factors contribute:

1. Causal masking

Transformer models process tokens left-to-right with causal attention masks. A token at position 100 can attend to all previous tokens (positions 0-99). A token at position 5000 can attend to all previous 4999 tokens. But tokens at the beginning receive attention from every subsequent token, making them disproportionately influential. Middle tokens get less cumulative attention simply because fewer tokens follow them.

2. Rotary Position Embedding (RoPE)

RoPE encodes positional information by rotating token embeddings in high-dimensional space. The dot product similarity between tokens decays as their positional distance increases. This makes it harder for the model to establish strong connections between tokens that are far apart — like an instruction at position 1000 and relevant data at position 8000.

3. Information overload

When context exceeds a certain length, models default to heuristics. Primacy: "The beginning probably contains task instructions." Recency: "The end probably contains the latest, most relevant information." The middle? Neither instruction nor recency, so it's deprioritized. Cognitive load (or its algorithmic equivalent) pushes the middle out of focus.

What Doesn't Work

Bigger context windows: GPT-4 Turbo's 128k window, Claude's 200k window, Gemini's 1 million token window — all impressive. None eliminate position bias. The middle grows, the problem scales with it.

Naive prompting: "Pay attention to the entire context" or "Information may appear anywhere" doesn't override architectural bias. Prompts are tokens. They sit at the beginning. They're forgotten by the time the model reaches the middle.

Model training alone: While training on long-context data improves absolute performance, it doesn't flatten the U-curve. The bias is structural, not a lack of exposure to long documents.

Agents Can't Self-Diagnose Position Bias

Can an agent detect when it missed middle-context information? Not reliably.

Confidence scores don't correlate. The model is equally confident in answers derived from beginning-context and middle-context. It doesn't "know" it failed to attend properly.

Self-reflection requires ground truth. An agent can reflect on its reasoning: "Did I use all available information?" But without external validation (a human pointing out the error, or a test asserting the wrong answer), the agent can't know its reflection is incorrect.

Attention weights aren't accessible during inference. Even if you could inspect attention, knowing "position 5000 received low attention" doesn't tell you if that was appropriate (irrelevant info) or a mistake (critical info ignored).

What works: Human-in-the-loop detection. The user says, "You missed X, it was in the document I sent." The agent retries with X explicitly moved to the beginning of the context or retrieved separately. The failure is caught externally, not internally.

Or: architectural mitigation. If you never load full documents into context, the agent never gets the chance to lose information in the middle.

The lesson: lost-in-the-middle isn't a bug the agent can debug. It's a constraint you design around.

Why Self-Learning Fails at the Architecture Level

The previous sections examined why autonomous improvement loops fail (no external reward signal, alignment drift) and how supervised refinement works (bounded iteration with human validation). But there's a deeper problem that connects self-learning directly to architectural limitations: agents that are supposed to "learn" from their own context often can't even use what's already there.

Agents Don't Follow Their Own Procedures

Consider an agent system where learned procedures are stored in context — workflow steps that emerged from past corrections, validated by humans, and injected into every session. The procedures are correct. They're available. And yet: the agent ignores them.

This isn't hypothetical. Hermes Agent, one of the most visible "self-learning" frameworks in 2026, exhibits exactly this pattern:

- Skills are advisory, not binding. There is no enforcement mechanism. The agent may use a learned skill, or it may improvise its own approach. The architecture doesn't distinguish between the two.

- Self-evaluation has strong bias. When Hermes evaluates its own performance, it almost always concludes it did well. This isn't confidence — it's the absence of external ground truth.

- Incorrect procedures get saved as successful skills. Without human validation of outcomes, the agent's skill library accumulates untested, potentially broken procedures.

- Manual edits get overwritten. When humans correct a procedure, Hermes' self-modification loop may overwrite that correction in the next cycle. The system prioritizes its own learning over human curation.

The critical observation: the learned knowledge is available and works when the agent is prompted to use it. After a human reminder — "there's a procedure for this in your context" — the agent follows it correctly. The information isn't missing. The model doesn't actively seek it out.

This creates a paradox for self-improving systems: the agent can learn (procedures improve over time through human feedback), but it can't reliably apply what it learned (procedures sit unused in context). Improvement happens. Consistent application doesn't.

Lost-in-the-Middle Makes Self-Learning Structurally Unreliable

Why would an agent ignore procedures that are literally in its context window? The answer connects to the architectural blind spot discussed earlier.

Procedures, learned behaviors, and accumulated knowledge typically end up in the middle of an agent's context. System instructions occupy the beginning (primacy position). The user's current message sits at the end (recency position). Everything the agent has "learned" — procedures, preferences, past decisions — gets sandwiched between them.

The U-shaped attention curve predicts exactly what happens: the agent attends to its core instructions (beginning) and the immediate task (end), while deprioritizing the learned procedures in the middle. The 30%+ accuracy drop documented by Liu et al. applies directly: an agent with a learned procedure at position 40-60% of its context will ignore that procedure roughly one-third of the time.

This isn't a bug in a specific implementation. It's a structural consequence of transformer attention patterns interacting with how agent contexts are assembled. Every agent framework that stores learned knowledge in context — and that's most of them — faces this problem.

The implication for self-learning is severe. Even if you solve the reward signal problem (external validation), even if you solve the alignment problem (correct metrics), even if you bound your reflection loops (3 iterations max) — the agent may still not use what it learned, because learned knowledge occupies the wrong position in context.

Self-improvement requires two capabilities: learning something new, and reliably applying what was learned. Current architectures struggle with the second capability, and no amount of better learning algorithms fixes a retrieval problem.

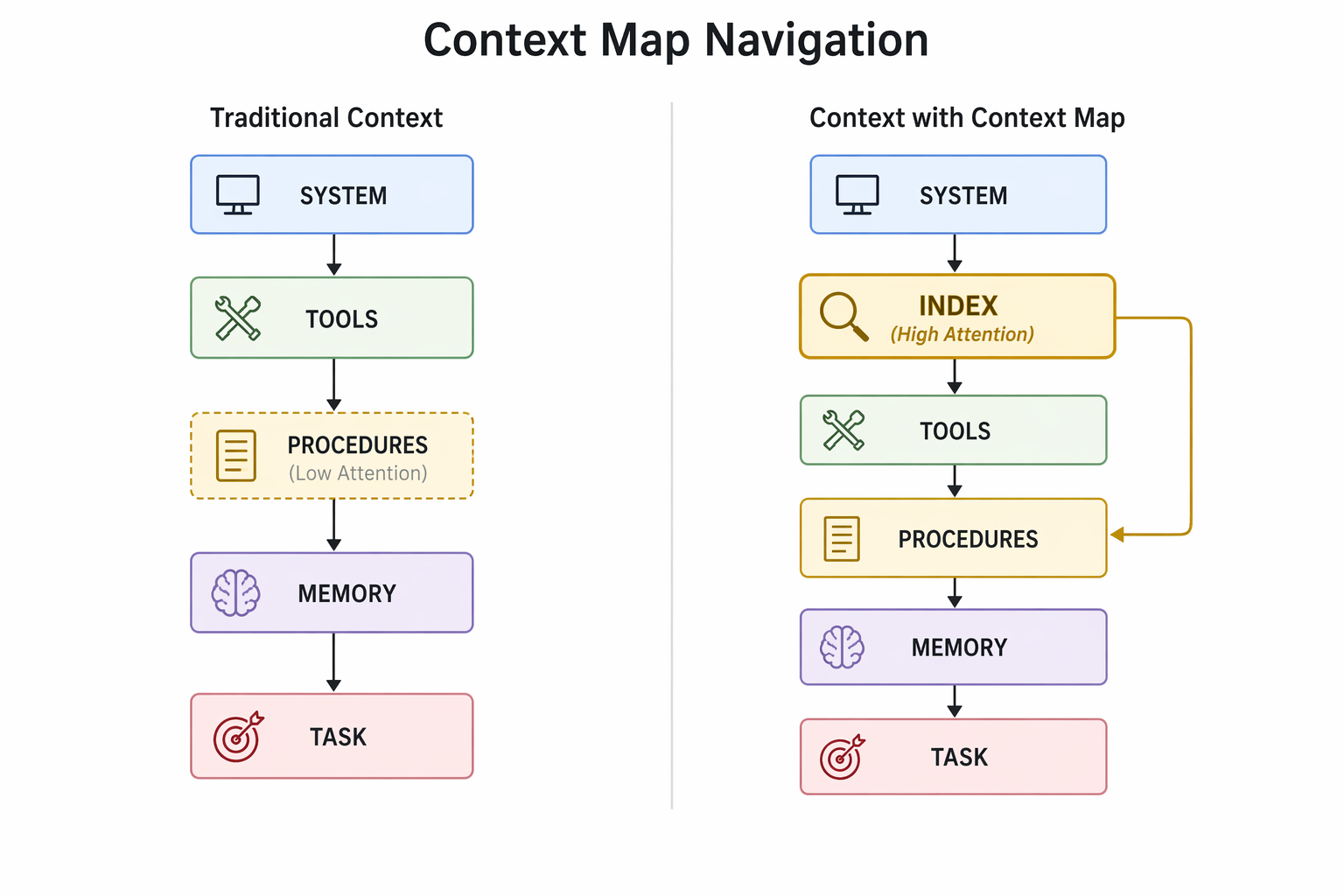

The Context Map: Navigating Instead of Scanning

If the problem is positional — learned procedures get lost in the middle because the model scans passively rather than searching actively — then the solution is navigational.

The concept: place a compact index at the beginning of the context that tells the model what knowledge is available and where to find it. Not a duplicate of the procedures themselves. A table of contents. A retrieval cue that exploits primacy bias rather than fighting it.

The mechanism draws on established cognitive science principles and recent LLM research:

- Chain-of-Note (Yu et al., 2023): Models that generate a "reading note" summarizing their context before answering questions show significantly better long-context recall. The note acts as a cognitive scaffold.

- Self-RAG (Asai et al., 2023): Models that index their own context and retrieve relevant passages on-demand outperform models that rely on full-context scanning. The retrieval step converts passive reading into active search.

A Context Map applies these principles at the system level. Without it, the model scans passively: system instructions at the beginning, tools and formats next, learned procedures buried in the middle, the current task at the end. Procedures at position 40-60% receive deprioritized attention. The model improvises its own approach because it effectively "forgot" the procedure exists.

With a Context Map at position 2 (primacy zone), the model reads: "Section 8 contains procedures for PDF generation and web publication. Check these before starting any publishing task." The model knows the procedure exists (primacy effect) and can actively navigate to it (retrieval cue) rather than hoping passive scanning catches it.

This is the difference between a book without and with a table of contents. The information is the same. The reader's ability to find it is radically different.

Why This Matters for Self-Improvement

The Context Map doesn't make agents autonomous. It makes their accumulated learning accessible.

Without it, self-learning systems face a cruel irony: they can improve their procedures through supervised refinement (the approach that works), but the improved procedures still get lost in the middle of context. Human corrections get applied once, stored correctly, and then ignored in the next session because the model doesn't attend to the middle.

With it, the feedback loop closes properly:

1. Human corrects agent behavior ("follow the procedure for this task") 2. Procedure is updated in the agent's knowledge base 3. Context Map is regenerated with a reference to the updated procedure 4. Next session: the model sees the Context Map reference (primacy position), navigates to the procedure (active retrieval), and applies it (correct behavior)

The improvement sticks — not because the model "learned" in the deep sense, but because the architecture ensures learned knowledge remains accessible regardless of its physical position in context.

This is supervised refinement meeting architectural mitigation. The human provides the feedback. The architecture ensures the feedback persists across sessions. Neither alone is sufficient. Together, they create a system that reliably applies what it has learned.

Limitations and Open Questions

The Context Map approach is not a complete solution to lost-in-the-middle. Several limitations remain:

Scaling. As the number of procedures grows, the Context Map itself grows. At some point, the index becomes long enough to push other content into the middle. Hierarchical indexing (categories → subcategories → specific procedures) may help, but adds complexity.

Accuracy of self-assessment. The model must correctly determine which Context Map entries are relevant to the current task. This is itself a classification problem that may fail — the model might check the wrong procedure, or conclude (incorrectly) that no procedure applies.

No enforcement. Like Hermes' advisory skills, a Context Map is a retrieval cue, not a constraint. The model can still ignore it. The difference is probabilistic: a reference at position 2 is far more likely to be attended to than a procedure buried at position 5000. But "far more likely" isn't "guaranteed."

Position isn't the only factor. Attention is also influenced by semantic relevance, instruction formatting, and model-specific biases. A Context Map helps with positional attention, but doesn't address cases where the model understands the procedure exists and consciously decides (correctly or incorrectly) that it doesn't apply.

The honest assessment: Context Maps improve the probability that learned knowledge gets used. They don't guarantee it. In a field that promises autonomous self-improvement, "improved probability of applying learned procedures" sounds modest. It's also the realistic engineering answer.

Conclusion: Embrace Constraints, Build Systems

The dream of fully autonomous self-improving AI agents is seductive. Deploy once, watch them evolve, reap exponential gains. The reality: agents that work embed autonomy within human supervision. They execute tasks independently. They propose improvements. But humans validate those improvements and adjust course when optimization drifts.

Three Core Truths

1. Autonomy is a spectrum, not a destination

Agents can autonomously fetch data, generate text, execute code, chain tool calls. These are impressive feats. They're also task automation, not self-improvement.

Improvement — getting measurably better over time — requires feedback loops grounded in external reality. RLHF provides human rankings. Code execution provides pass/fail signals. User satisfaction scores provide real-world validation. Remove these, and the agent optimizes in a vacuum. Circular reasoning replaces progress.

Karpathy's "loopy era" envisions continuous refinement without human intervention. The vision is compelling. The implementation hits the reward signal problem: without external grounding, loops degrade into noise. AutoGPT's 45-60% higher token usage without proportional performance gains demonstrates the cost.

The effective approach: bounded autonomy. Agents run reflection loops for 3 rounds, not indefinitely. They autonomously generate candidate solutions, but humans validate them. The agent works hard. The human works smart.

2. Architecture beats brute force

Bigger models don't solve lost-in-the-middle. GPT-4 Turbo's 128k context, Claude's 200k, Gemini's 1 million tokens — all exhibit position bias. The window expands, the middle grows, the blind spot scales with it.

The solution isn't prompting tricks or model improvements. It's architectural:

- RAG: Don't load full documents. Retrieve relevant chunks, compose short contexts, keep critical info at the beginning.

- Externalized state: Write intermediate results to files or databases. Load on-demand. The middle only exists in storage, not in context.

- Context Maps: Index available knowledge at the beginning of context, enabling active navigation rather than passive scanning.

- Semantic chunking: Break at logical boundaries. 3 coherent chunks outperform 40 fragmented ones.

These patterns are production-ready in 2026. LangChain, LlamaIndex, AWS RAG — mature tooling, well-documented, actively maintained. The technology isn't the constraint. Adopting it is.

3. Self-learning requires reliable self-application

An agent can accumulate better procedures through supervised refinement. But if those procedures sit in the middle of a growing context window, the agent ignores them one-third of the time. Self-improvement requires two capabilities: learning something new, and reliably applying what was learned. Current architectures struggle with the second.

Architectural mitigations — Context Maps, externalized state, retrieval-based knowledge access — improve the probability that learned knowledge gets used. They don't guarantee it. But "improved probability" is the realistic engineering answer in a field that promises magic.

What to Build

Not agents that improve without humans. Agents that improve because of humans.

- Supervised refinement workflows: Agent generates, human validates, agent refines. Bounded iterations (3 rounds max). External termination criteria.

- Architectural mitigations: RAG instead of full-context. Externalized state instead of growing conversations. Context Maps for navigable knowledge. Semantic chunking instead of arbitrary splits.

- Knowledge accessibility by design: Ensure learned procedures remain findable — not buried in the middle of ever-growing contexts. Index what the agent knows. Make retrieval active, not passive.

The result: agents that execute autonomously, improve through supervision, and avoid failure modes that pure autonomy invites.

Final Thought

"Self-improving AI" is both myth and reality. The myth: fully autonomous agents that evolve without human guidance. The reality: systems where autonomous execution is embedded in supervised refinement loops.

The reality is narrower than the myth. It's also the only part that works.

Marketing will keep promising autonomy. Practitioners will keep building supervision. Choose wisely. The agents that scale aren't the most autonomous. They're the most disciplined.

Sources:

- Lost in the Middle: How Language Models Use Long Contexts — Liu et al., 2023. Quantifies 30%+ accuracy drop for information placed in middle of context windows.

- LangChain Reflexion Documentation — Official tutorial on building reflection agents with bounded iteration and external validation.

- OpenAI Cookbook: Self-Evolving Agents — Supervised refinement workflows with human-in-the-loop evaluation.

- RLHF: Reinforcement Learning from Human Feedback — Hugging Face explainer on the foundation of modern LLM alignment.

- Chain-of-Note Prompting — Yu et al., 2023. Models that generate reading notes show better long-context recall.

- Self-RAG: Learning to Retrieve, Generate, and Critique — Asai et al., 2023. Models that index and retrieve from their own context outperform passive scanning.

- Rotary Position Embeddings (RoPE) — Su et al., 2021. Technical foundation of positional encoding in modern LLMs.

- Model Collapse: Recursive Training on Synthetic Data — Shumailov et al., 2023. Shows degradation when models train on their own outputs without external grounding.

- HumanEval Code Generation Benchmark — OpenAI's benchmark for evaluating code generation models.

- AutoGPT GitHub Repository — Open-source autonomous agent framework demonstrating closed-loop execution patterns.

- Hermes Agent Framework — Nous Research's self-learning agent system with skill generation capabilities.

- Claude Long Context Performance — Anthropic's 200k context window announcement and performance characteristics.

- GPT-4 Turbo Technical Report — OpenAI's technical details on 128k context window capabilities.

- Google Gemini 1.5 Pro: Long Context — Google's 1 million token context window announcement.

- Coalition for Content Provenance and Authenticity (C2PA) — Industry standard for content authenticity and AI-generated media tracking.